How Crisis-Tested Leaders Structure AI-Assisted Decisions to Meet Board, Audit, and Regulatory Standards

Executive Summary

The Problem: AI capability is accelerating faster than governance, integration, and organizational readiness, and the gap is widening. Organizations are making decisions faster than they can defend them.

The Solution: Five-Pillar Framework (Authority, Assumptions, Analysis, Accountability, Auditability)

For: C-suite, Board Members, Risk Officers, Compliance Teams

Reading Time: 55 minutes | Implementation Time: 30 days

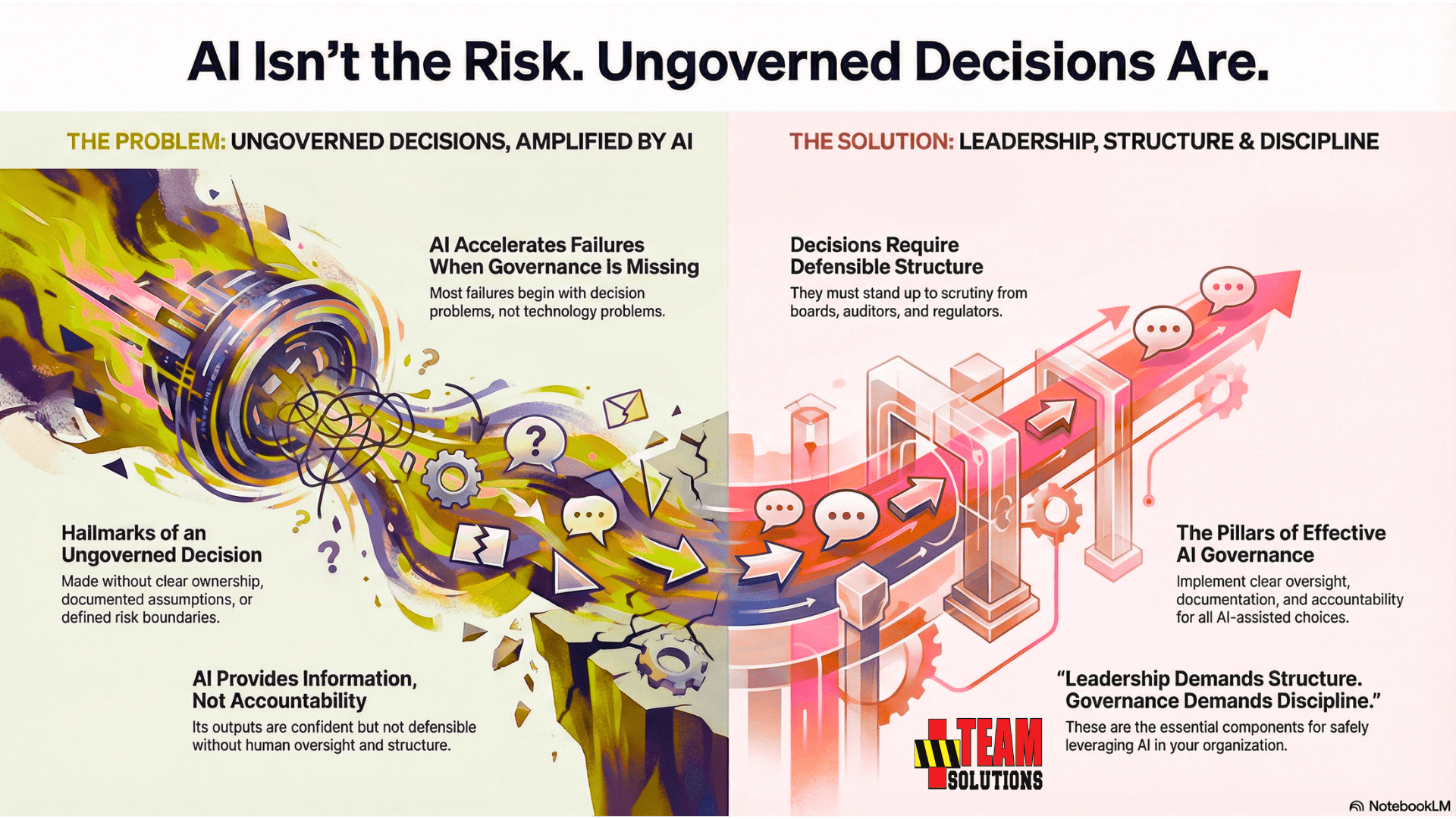

AI Will Not Break Your Company. Ungoverned Decisions Will.

Artificial intelligence has changed how organizations think, analyze, and act. It delivers speed that leaders have never had before. It generates clarity on demand. It fills gaps that once required entire teams. But there is one thing AI does not provide: governance.

Most failures in organizations do not begin with technology problems. They begin with decision problems.

- Decisions made without clear ownership.

- Decisions made without documented assumptions.

- Decisions made without risk boundaries, accountability, or any record of why the choice made sense at the time.

AI accelerates those failures when governance is missing.

Across industries, executives are relying on AI tools to support decisions that carry financial, operational, legal, and reputational consequences. These systems produce confident output, but they do not produce defensible output. They provide information, not accountability. They help people think, but they do not help leaders govern.

AI-assisted decisions remain exposed unless they meet the standards that boards, auditors, and regulators expect.

- Decisions require structure.

- They require oversight.

- They require documentation.

- They require clarity that stands up under pressure.

This guide shows how to deliver all four.

What This Guide Is and How Leaders Should Use It

AI adoption has outpaced AI governance. Organizations are deploying generative models without the controls required to keep executive decisions defensible, auditable, and aligned with established governance standards. This guide exists to close that gap by giving leaders a disciplined structure for AI-assisted decisions before the consequences arrive.

This resource is designed as a practical reference for executives, board members, and decision owners who carry responsibility for outcomes. It explains what modern decision governance requires, why generic AI tools fail those requirements, and how organizations can build governance systems that match the speed and complexity of AI.

Who This Guide Serves

This guide is written for leaders who operate in environments where decisions must stand up to scrutiny. These roles face accountability from boards, auditors, regulators, investors, and the people they lead.

Primary audiences include C-suite executives, board members, risk officers, and compliance teams.

These roles have different domains but share the same requirement: every material decision must be traceable, justified, and defensible.

What This Guide Covers

This guide provides a structured breakdown of the core elements required to govern AI-assisted decisions responsibly:

A. The Governance Gap

The structural void between AI-generated output and the governance standards executives must meet.

B. Why Intelligence Is Not Governance

How confident AI output fails under board and regulatory scrutiny.

C. Governance Requirements

The non-negotiable elements that make an AI-assisted decision safe, defensible, and audit-ready.

D. Why Generic AI Fails Governance

The structural limitations of ChatGPT, Claude, Gemini, and Meta AI for high-stakes decisions.

E. Human-in-the-Loop (HITL) Expectations

Why oversight cannot be optional and how executives should structure it.

F. Risk and Liability Considerations

The new exposure categories introduced by generative AI in operational, financial, and compliance domains.

G. The Five-Pillar Governance Framework

Authority, Assumptions, Analysis, Accountability, Auditability: the foundation for every AI-assisted decision.

H. Evaluation Criteria for AI Systems

How to determine when AI is appropriate, when it becomes a liability, and what standards it must meet.

I. High-Stakes Use Cases

Where organizations are already relying on AI in ways that outpace their governance.

J. What Good Governance Looks Like

Practical examples of structured decision-making that meets executive standards.

K. The Future of Decision Governance

Why governance becomes a competitive advantage as AI becomes embedded in executive work.

This guide establishes the standards. The organization must enforce them.

How to Use This Guide

Executives do not read long documents linearly. They read with intent.

This guide is structured accordingly.

Use it in three ways:

- Jump directly to the section relevant to the decision at hand. Every section stands alone.

- Use the five-pillar governance framework and evaluation criteria to test whether current AI-assisted decisions meet required standards.

- Apply the governance requirements to build internal policy, align teams, and structure oversight around AI-supported work.

This guide provides clarity for the top of the organization and direction for the teams responsible for implementing governance controls.

Why This Guide Matters Now

Organizations are already using AI for:

Leaders often assume these are low-risk uses.

They are not.

Every one of these domains carries:

Without governance, AI creates a false sense of clarity.

Decisions made in that environment become difficult to defend later.

This guide exists to give executives the structure required to prevent those failures.

About the Author

This framework is built from nearly two decades of crisis leadership experience, where decisions made under pressure must be defensible under scrutiny.

The Governance Gap: Where AI-Assisted Decisions Break

Most organizations adopt AI to speed up analysis, sharpen insight, or reduce workload.

Those benefits are real. The risk is real as well.

The danger does not come from the technology. The danger comes from decisions made faster than they are governed.

The Governance Gap is the space between what AI produces and what organizations actually need to make defensible decisions.

It appears when executives rely on AI output without the structures that boards, auditors, and regulators expect. This gap is often invisible until a decision is questioned, challenged, or reviewed after the fact.

Definition of the Governance Gap

The Governance Gap is the mismatch between:

What AI tools provide:

Information, summaries, suggestions, analysis, and options.

What leaders are accountable for:

Ownership, assumptions, risk boundaries, oversight, documentation, and decision defensibility.

- AI accelerates the thinking process.

- Executives are still accountable for the decision-making process.

The gap between those two realities is where organizations are exposed.

Why the Governance Gap Exists

The gap exists for practical reasons:

1. AI is built for interaction, not accountability

Models like ChatGPT, Claude, Gemini, and Meta AI create fluid conversation and helpful output. They were never built to assign responsibility, track assumptions, or record decision history.

2. AI produces confidence, not justification

Confident answers are not governed answers. Boards and auditors reject confidence without structure.

3. AI makes decisions appear simpler than they are

When a complex issue is reduced to a neat paragraph, leaders underestimate risk, timing, and interdependencies.

4. AI hides the assumptions behind the output

The model does not reveal what it ignored, misinterpreted, or weighted incorrectly.

5. Organizations do not yet have formal AI decision policies

Most companies have AI usage guidelines but lack AI decision governance standards. The two are not the same.

6. Leaders assume AI output is traceable

It is not. Without governance, there is no record of what was assumed, what was considered, or why a direction was chosen.

The Governance Gap is a structural problem, not a technical one.

Real-World Example: The Budget Reallocation That Couldn't Be Defended

A mid-sized healthcare organization used AI to analyze departmental budget performance and generate reallocation recommendations. The CFO reviewed the output, found it logical, and approved a 15% shift in Q3 funding from facilities to IT infrastructure.

Three months later, the board asked for the rationale behind the decision during an operational review. The CFO could not produce:

- The assumptions the AI used about facility utilization trends

- The risk assessment of delayed maintenance

- The scenario analysis for worst-case infrastructure failures

- The documentation of who approved the decision and why

The AI-generated analysis had been deleted. The decision record did not exist. The board flagged the incident as a governance failure, and internal audit opened a review of all AI-assisted financial decisions.

The reallocation itself was reasonable. The lack of governance made it indefensible.

How AI Accelerates the Governance Gap

AI creates speed, volume, and clarity at levels humans cannot match. That is the advantage.

It is also the multiplier of risk when governance is absent.

AI accelerates:

When AI speeds up the wrong part of the process, leaders reach conclusions faster than they validate them.

The result is not improved decision-making.

The result is faster misalignment.

Where Organizations Fail Most Often

Patterns appear across industries:

1. Decisions without ownership

AI presents an answer. No one explicitly owns the decision that follows.

2. Assumptions never documented

Teams cannot reconstruct why a decision seemed reasonable at the time.

3. Risk discussed informally

Risks appear in conversation but never land in a structured format.

4. No scenario range

AI often provides a single path. Executives need best-case, likely, and worst-case scenarios.

5. No review gates

Decisions drift because nothing forces re-evaluation at fixed moments.

6. No kill metrics

Leaders do not define thresholds where action must stop or shift.

7. No audit trail

If something goes wrong, the organization has no defensible record.

These breakdowns do not happen because leaders are careless. They happen because AI appears to make decisions easier than they are.

Early Warning Signs of a Governance Gap

Executives can spot a developing governance gap by watching for:

When these patterns emerge, the organization is already exposed.

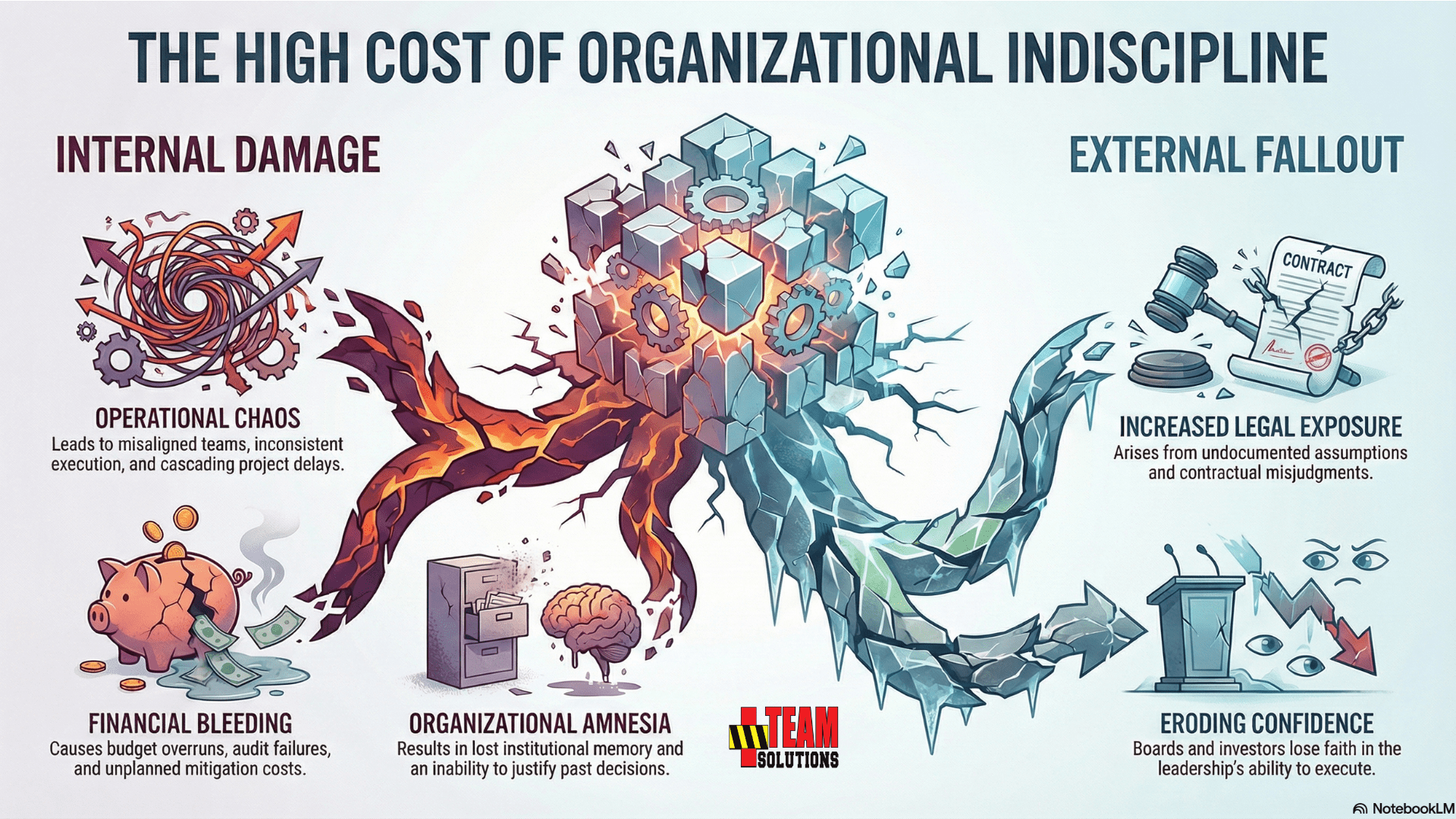

Consequences Leaders Often Overlook

The Governance Gap creates problems that are not visible until confronted by pressure.

- Misaligned teams, inconsistent execution, stalled projects, and cascading delays.

- Budget overruns, audit failures, risk findings, and unplanned mitigation costs.

- Exposure from undocumented assumptions, HR decisions, compliance interpretations, and contractual misjudgments.

- Boards and investors lose confidence in leadership discipline.

- Loss of institutional memory and an inability to justify past decisions.

Every one of these failures begins with the same blind spot: believing AI output is "good enough" without governance.

Why Leaders Miss the Gap

Leaders underestimate the Governance Gap because:

AI changes speed. It does not change responsibility.

Without governance, AI-assisted decisions are fast, clear, and exposed.

Red Flags: 15 Warning Signs of Governance Failure

If your organization exhibits three or more of these warning signs, you have a governance gap that will eventually become a governance failure.

The question is not whether exposure exists. The question is when it will be discovered.

1. No Named Decision Owner in Meeting Notes

When meeting minutes document that "the team decided" or "we agreed to move forward" without identifying who owns the final call, authority has failed.

Decisions without owners cannot be defended. When outcomes differ from expectations, collective responsibility becomes collective blame. No one can explain the reasoning because no one was accountable for the reasoning.

If your meeting notes do not name a decision owner, governance does not exist.

2. "AI Recommended It" Used as Justification

The moment an executive or manager uses "the AI tool suggested this approach" as the primary justification for a decision, accountability has collapsed.

AI does not accept responsibility. AI cannot testify under oath. AI cannot explain its reasoning to a board or auditor. When a decision fails and the only defense is "we followed the AI's recommendation," the organization has no defensible position.

AI informs decisions. Humans own them. If your team confuses the two, you are exposed.

3. Assumptions Buried in Appendices or Omitted Entirely

Decisions presented to leadership without a clearly documented assumptions section are time bombs.

When assumptions are invisible, no one can challenge them before action begins. When the decision fails, no one can reconstruct whether the original logic was sound or whether conditions changed. Governance requires assumptions to be explicit, tested, and preserved.

If assumptions are not in the first three pages of your decision brief, they will not be reviewed.

4. No Kill Metrics Defined Before Launch

Organizations that approve major initiatives without defining objective thresholds that trigger reassessment or termination are operating without accountability.

Kill metrics are not pessimism. They are discipline. They prevent sunk-cost bias from forcing teams to continue failing strategies because "we already invested so much." Without predefined stop conditions, every decision becomes permanent until catastrophic failure forces reversal.

If you cannot name the metric that would stop the initiative, you cannot govern the initiative.

5. Board Asks "Who Approved This?" and Gets Silence

When a board or audit committee questions a decision and the response involves multiple people pointing to each other, authority was never established.

This silence reveals that no single person was empowered to make the call, documented the reasoning, or accepted ownership of the outcome. Governance cannot be reconstructed after the fact. The record either exists or it does not.

If the board has to ask who decided, governance failed before the decision was made.

6. Decisions Reversed Within 90 Days Without Documented Triggers

Rapid reversals without clear documentation of what changed signal that the original decision lacked structured analysis.

Changing course is not failure. Changing course without knowing why the original path was wrong is governance failure. Organizations that reverse decisions should be able to produce documentation showing which assumption collapsed, which risk materialized, or which threshold was crossed.

If your reversal explanation is "it just wasn't working," your governance is broken.

7. Risk Sections Contain Only Positive Scenarios

Decision briefs that frame risks as "potential upside if we move faster" or "opportunity cost of delay" are not conducting risk analysis. They are advocacy disguised as governance.

True risk analysis includes worst-case scenarios, mitigation requirements, and probability assessments. If every risk identified has a positive framing, the analysis is not objective. It is confirmation bias documented as due diligence.

Risk analysis that does not include failure scenarios is marketing, not governance.

8. No Documented Human-in-the-Loop Checkpoints

AI-assisted decisions without defined review gates, approval requirements, or oversight timing are operating on autopilot.

Governance demands that someone with authority reviews the AI output, validates the assumptions, challenges the analysis, and approves the direction before action begins. If this step is not documented, it did not happen.

If your process has no formal checkpoint between AI output and decision execution, you have no governance.

9. Same Person Provides Analysis and Approval

When the individual who conducted the AI-assisted analysis is also the person who approves the decision without independent review, accountability has failed.

This structure eliminates the challenge function. No one with authority and distance from the analysis is testing assumptions, questioning risks, or validating scenarios. Self-approval is not governance. It is rubber-stamping.

Governance requires separation between analysis and approval. Without it, oversight does not exist.

10. Audit Requests Met With Scrambling for Documentation

Organizations that respond to internal audit or board requests by frantically assembling post-hoc justifications do not have governance systems. They have storytelling exercises.

When decisions are properly governed, the audit trail exists before anyone asks for it. Authority is documented. Assumptions are recorded. Analysis is structured. Accountability is clear. Auditability is automatic.

If producing the decision record requires detective work, the decision was never governed.

11. Generic AI Outputs Copied Into Decision Briefs Without Attribution

Decision documents that include AI-generated text without clearly marking it as AI-generated or documenting the prompts used are masking the source of recommendations.

This practice creates false confidence. Readers assume the analysis reflects human judgment and organizational context when it actually reflects statistical pattern matching. When assumptions collapse or analysis proves flawed, no one can reconstruct what the AI was asked or what constraints existed.

Unmarked AI content in decision briefs is intellectual fraud disguised as due diligence.

12. Material Decisions Made in Slack or Email Without Formal Process

Chat-based decision-making bypasses governance by design.

When major commitments, budget approvals, or strategic pivots occur in threaded conversations without formal documentation, authority is unclear, assumptions are scattered, analysis is non-existent, accountability is ambiguous, and auditability is impossible.

Informal channels are appropriate for discussion. They are not appropriate for decisions that carry material consequences.

If your most important decisions happen in Slack, your governance exists in Slack. That is not governance.

13. Executives Cannot Explain Decisions Made 60 Days Ago

When leadership is asked to reconstruct the reasoning behind a recent major decision and cannot produce clear answers, the organization is operating without institutional memory.

This failure reveals that decision logic was never documented, assumptions were never recorded, or the decision record was never preserved. Governance ensures that anyone reviewing a decision six months later can understand why it made sense at the time.

If you cannot explain past decisions, you cannot defend current decisions.

14. AI Tool Changes and No One Updates the Governance Process

Organizations that switch from ChatGPT to Claude or from Gemini to a custom LLM without reassessing their decision governance are treating AI tools as interchangeable when they are not.

Different AI tools have different strengths, weaknesses, training data, and failure modes. Governance processes must account for these differences. Assuming that governance designed for one AI system automatically applies to another is negligent.

Tool changes demand governance reviews. If your process does not account for this, your governance is fragile.

15. Leadership Treats Governance as Optional for "Urgent" Decisions

The moment an executive says "we do not have time for the full process on this one," governance has been subordinated to urgency.

This exception always becomes the rule. Once leadership signals that speed justifies bypassing governance, every decision becomes urgent. The framework collapses. Accountability disappears.

Urgent decisions still require authority, assumptions, analysis, accountability, and auditability. The format may compress. The timeline may accelerate. But the governance requirements do not vanish.

If governance is optional when decisions matter most, governance is theater, not protection.

How to Use This List

Conduct a governance audit using these fifteen warning signs as diagnostic criteria:

- 0-2 red flags present: Your governance is functional but should be formalized to prevent drift.

- 3-5 red flags present: You have systemic gaps that will eventually produce a material failure.

- 6-10 red flags present: Governance failure is not a risk. It is a certainty. Implementation is urgent.

- 11+ red flags present: You are operating without governance. Every AI-assisted decision is exposed.
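For teams that want to track this audit in a script or spreadsheet export, the scoring bands above can be sketched as a small lookup. This is an illustrative sketch only: the function name `governance_band` and the short band labels are assumptions made for the example, not part of the framework itself.

```python
def governance_band(red_flags: int) -> str:
    """Map a count of observed red flags (0 to 15) to one of the four bands.

    The labels are shorthand invented for this sketch; the thresholds
    follow the list above.
    """
    if not 0 <= red_flags <= 15:
        raise ValueError("red_flags must be between 0 and 15")
    if red_flags <= 2:
        return "functional"      # formalize to prevent drift
    if red_flags <= 5:
        return "systemic gaps"   # material failure is likely over time
    if red_flags <= 10:
        return "urgent"          # implementation cannot wait
    return "ungoverned"          # every AI-assisted decision is exposed
```

The value of writing the bands down this way is consistency: two reviewers who count the same number of red flags reach the same conclusion.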

The warning signs are not theoretical. They are observable evidence that the Five Pillars framework is absent or compromised.

Governance failures are never surprising in hindsight. They are always predictable. The question is whether you identify them before or after the failure occurs.

Intelligence Is Not Governance: Why AI Output Fails Under Scrutiny

AI has created a dangerous illusion inside organizations. When output looks polished, people assume it must be correct. When the answer is delivered confidently, they assume it must be reliable. When the explanation is logical, they assume it must be ready for action.

None of these assumptions hold up once a decision reaches a boardroom, an auditor, or a regulator.

AI models excel at producing language that feels authoritative.

Governance demands evidence that the decision behind that language can survive scrutiny.

These are not the same thing, and executives who treat them as interchangeable increase their exposure every time they rely on AI for high-stakes work.

The Illusion of Authority

AI systems produce answers that appear:

- Fluent

- Confident

- Structured

- Polished

- Complete

These qualities mimic executive reasoning but do not replicate it.

The appearance of authority is not the presence of governance.

Where the illusion breaks:

- The model does not reveal what information it ignored

- It does not record what assumptions it made

- It does not assign responsibility to a decision owner

- It cannot validate whether the logic holds under real-world constraints

- It cannot account for the organizational, political, or regulatory context

Executives often mistake strong writing for strong reasoning. AI is trained to deliver the former, not guarantee the latter.

Confidence Is Not Accuracy

At their core, large language models are trained to predict the most probable next word. They are not trained to produce the most defensible decision.

As a result:

- A confident answer can be inaccurate

- A clear answer can be incomplete

- A logical answer can be misaligned with risk

- A helpful answer can be unsafe to use

- A fast answer can be premature

Boards and auditors do not care how confident the output sounds. They care whether the decision can be justified.

AI cannot supply that justification without governance controls around it.

Real-World Example: The Compliance Interpretation That Failed Audit

A financial services company used AI to interpret new regulatory guidance on customer data retention. The AI provided a clear, confident summary suggesting the organization had 18 months to comply with new archival requirements.

The compliance team accepted the interpretation and adjusted their implementation timeline accordingly.

During the annual audit, the external auditor challenged the 18-month timeline. The actual regulatory text required compliance within 12 months for certain customer categories. The AI had conflated two different sections of the guidance.

The organization faced:

- A compressed implementation timeline

- Emergency budget reallocation

- Audit findings on compliance controls

- Board questions about decision oversight

The AI output was confident and well-written. It was also wrong. The compliance team had no governance process to validate AI-generated regulatory interpretations before acting on them.

AI Output Collapses Under Scrutiny

Once an AI-generated recommendation is questioned, its weaknesses become obvious.

Common breakdowns include:

These failures do not appear when reading the output. They appear when defending it.

Insight Is Not a Decision

AI can generate options, analysis, and summaries.

It cannot:

Insight without responsibility is not a decision.

It is raw material for a decision.

Executives are responsible for the final call, not the model that generated the insight.

AI Cannot Account for Organizational Reality

Real decisions involve variables the model cannot see:

AI evaluates problems in a vacuum. Executives operate in an environment full of constraints the model cannot interpret.

Governance exists to ensure decisions reflect real conditions, not just clean analysis.

Traceability Is Mandatory for Executive Decisions

When a decision is reviewed, leaders must be able to show:

AI does not provide this unless the organization imposes structure around it.

Traceability is not an academic exercise. It is the foundation of defensibility.

Governance Is the Missing Layer

Intelligence alone does not:

Governance does all of these.

AI accelerates thinking. Governance anchors that thinking so it does not drift into risk.

This is the difference every organization must understand before integrating AI into critical decisions.

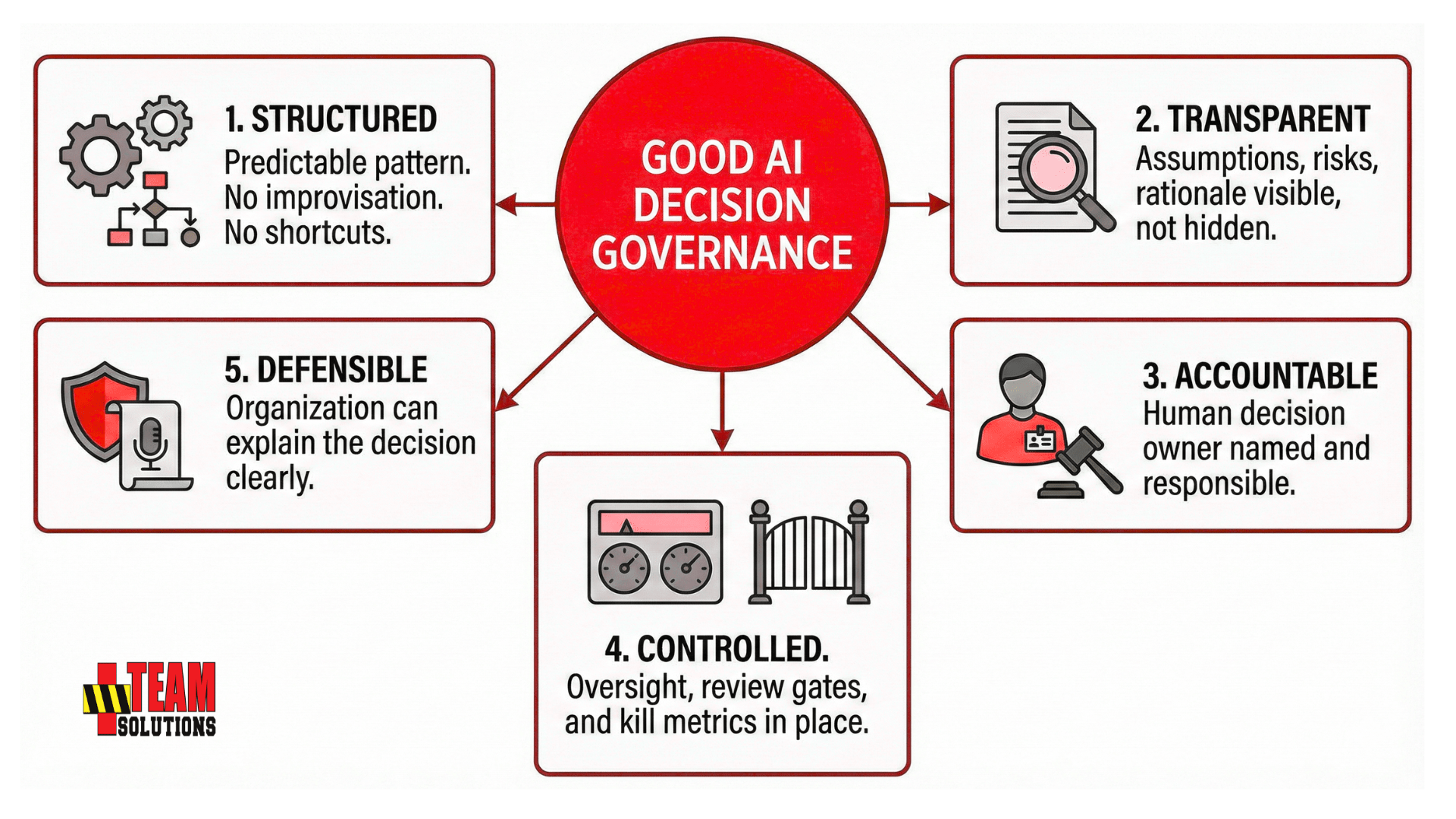

What Executive-Grade Decision Governance Requires

Executive decisions are not casual events. They carry financial risk, operational impact, legal exposure, and reputational consequences. In these environments, the standard for decision governance has always been high.

AI does not lower that standard. It raises it.

Modern AI tools give organizations speed, clarity, and analytical reach. Governance ensures those advantages do not turn into liabilities.

Decision governance is not a preference or a leadership style. It is the set of controls that keep decisions defensible when the environment becomes uncertain, the facts shift, or the decision is later questioned.

This section defines the ten non-negotiable requirements that executive-grade decisions must meet, regardless of whether AI is involved.

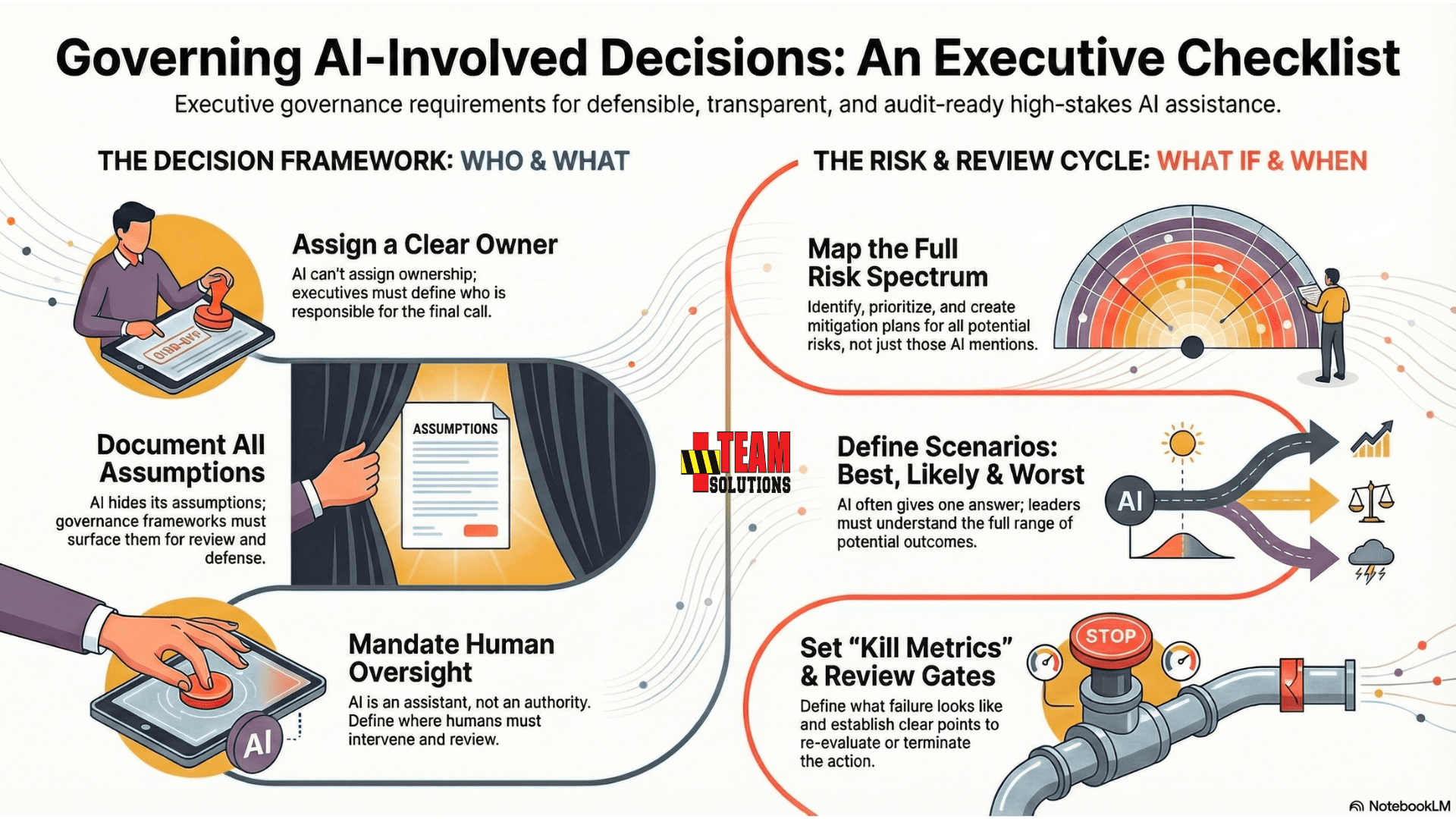

1. Clear Decision Ownership

A decision without an owner is a risk waiting to mature.

Ownership defines:

- Who is responsible for the final call

- Who is accountable for outcomes

- Who must be involved in oversight

- Who answers for the decision when challenged

AI cannot assign ownership. Executives must.

Without a named decision owner, accountability dissolves and ambiguity spreads across teams.

2. Documented Assumptions

Assumptions drive decisions more than data.

If assumptions are missing, undocumented, or unchallenged, the decision becomes impossible to defend later.

Organizations must document:

- What was believed at the time

- What constraints were assumed

- What conditions were expected

- What uncertainties existed

AI systems hide their assumptions. Governance frameworks surface them.

Executives who cannot articulate assumptions cannot defend decisions.

3. Risk Identification

No decision is complete without a clear understanding of risk.

Risk must be:

- Identified

- Recorded

- Categorized

- Assigned

AI often mentions risks informally. Executives must formalize them.

Governance requires that risk be visible before action begins, not after the consequences arrive.

4. Risk Prioritization

Not all risks are equal.

Leaders must know:

- What can be tolerated

- What must be mitigated

- What threatens objectives

- What requires immediate escalation

AI does not apply enterprise-level risk weighting. It treats all risks as narrative details.

Governance sorts risk by impact and urgency, not by whether the model happened to mention it.
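One way to make "impact and urgency" concrete is a simple risk register that can be sorted and screened. The sketch below is a minimal illustration: the 1-to-5 scales, the escalation threshold of 4, and the names `Risk`, `prioritize`, and `needs_escalation` are all assumptions chosen for the example, and an organization would substitute its own risk scale.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    impact: int   # assumed scale: 1 (tolerable) to 5 (threatens objectives)
    urgency: int  # assumed scale: 1 (can wait) to 5 (act immediately)
    owner: str    # the person assigned to this risk


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Order risks by impact first, then urgency, highest first."""
    return sorted(risks, key=lambda r: (r.impact, r.urgency), reverse=True)


def needs_escalation(risk: Risk, threshold: int = 4) -> bool:
    """Flag risks that are both high-impact and high-urgency."""
    return risk.impact >= threshold and risk.urgency >= threshold
```

The ordering here depends on recorded scores and a named owner, not on the order in which a model happened to mention each risk.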

5. Mitigation Plans With Validation Triggers

A mitigation plan is only useful if the organization knows when to activate it.

Executives must establish:

- Mitigation steps

- Responsible parties

- Timelines

- Validation triggers

- Conditions that require escalation

AI cannot integrate mitigation into operational reality without governance.

A mitigation plan without triggers is guesswork.

6. Scenario Range: Best, Likely, and Worst

Executives make decisions based on range, not single-path answers.

Governance requires:

- Best-case scenarios

- Most likely outcomes

- Worst-case consequences

- Risk boundaries for each scenario

AI tends to offer a single recommendation. Governance requires understanding variance, not optimism.

Boards expect scenario range. Compliance expects it. Auditors expect it. AI will not produce it unless explicitly prompted, and the result still has to be put into a structured form.
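A scenario range can be recorded as a small structure that refuses incomplete or inconsistent input. The following is a hedged sketch: it simplifies "risk boundaries for each scenario" to a single tolerance floor, and the names `ScenarioRange`, `risk_boundary`, and `spread` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioRange:
    best: float           # best-case outcome (for example, net impact in dollars)
    likely: float         # most likely outcome
    worst: float          # worst-case outcome
    risk_boundary: float  # assumed: the lowest outcome leadership will tolerate

    def __post_init__(self) -> None:
        # A range is only meaningful if the three cases are ordered.
        if not (self.worst <= self.likely <= self.best):
            raise ValueError("expected worst <= likely <= best")

    @property
    def spread(self) -> float:
        """Distance between best and worst case: a crude variance signal."""
        return self.best - self.worst

    def worst_case_breaches_boundary(self) -> bool:
        """True if the worst case falls below the tolerated floor."""
        return self.worst < self.risk_boundary
```

Requiring all three cases at construction time means a single-path recommendation cannot be entered into the record as if it were a range.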

7. Review Gates

Every high-impact decision needs defined points for re-evaluation.

Review gates specify:

- When the decision must be revisited

- What conditions trigger review

- Who is responsible for oversight

- What data is required at each checkpoint

AI does not know when a decision should be re-evaluated. Governance sets these expectations from the start.

Review gates prevent drift and keep decisions aligned with reality as it changes.
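The four elements of a review gate map naturally onto a record with a due date, a trigger, a reviewer, and required data. The sketch below is illustrative only; `ReviewGate` and `overdue_gates` are hypothetical names, and a real implementation would likely live in an existing project or GRC tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ReviewGate:
    due: date                  # when the decision must be revisited
    trigger: str               # condition that prompts the review
    reviewer: str              # who is responsible for oversight
    required_data: list[str]   # what must be on the table at the checkpoint
    completed: bool = False


def overdue_gates(gates: list[ReviewGate], today: date) -> list[ReviewGate]:
    """Return gates whose due date has passed without a completed review."""
    return [g for g in gates if not g.completed and g.due < today]
```

Running a check like this on a schedule is one way to surface drift before a board or auditor does.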

8. Kill Metrics

Kill metrics define the threshold where action must stop immediately.

Executives must articulate:

- What failure looks like

- What thresholds terminate the plan

- Who makes the stop-call

- How exceptions are handled

AI cannot define organizational risk limits. Governance must.

Kill metrics protect organizations from doubling down on failing decisions.
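Kill metrics work best when they are objective enough to be checked mechanically. As a minimal sketch under stated assumptions, each metric below carries a threshold, a direction, and the person who makes the stop-call; `KillMetric`, `stop_if_above`, and `breached_metrics` are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillMetric:
    name: str
    threshold: float
    stop_if_above: bool  # True: stop when observed > threshold; False: stop when observed < threshold
    stop_caller: str     # who makes the stop-call when the threshold is crossed

    def breached(self, observed: float) -> bool:
        if self.stop_if_above:
            return observed > self.threshold
        return observed < self.threshold


def breached_metrics(metrics: list[KillMetric], observations: dict[str, float]) -> list[str]:
    """Names of metrics whose latest observation crosses the stop threshold.

    Metrics with no observation are skipped here; a stricter policy
    might treat missing data as its own escalation trigger.
    """
    return [
        m.name
        for m in metrics
        if m.name in observations and m.breached(observations[m.name])
    ]
```

Defining the direction and the stop-caller up front removes the debate about whether a threshold "really" applies once money has been spent.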

9. Human-in-the-Loop Requirements

AI is an assistant, not an authority.

Human oversight must be explicit, structured, and mandatory in high-stakes environments.

HITL requirements define:

- Where humans intervene

- Who must review the decision

- What cannot be delegated to AI

- How oversight is documented

Without HITL, responsibility becomes blurred. Boards, regulators, and auditors consider this a governance failure.
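A documented checkpoint can be enforced as a gate in whatever workflow carries the decision. The sketch below assumes a simple rule set drawn from this guide: no execution without a recorded review, no self-approval, and no execution without explicit approval. The names `HumanReview` and `may_execute` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class HumanReview:
    analyst: str           # who produced the AI-assisted analysis
    reviewer: str          # who reviewed and approved it
    approved: bool
    reviewed_at: datetime  # when the review was recorded
    notes: str             # what was validated or challenged


def may_execute(review: Optional[HumanReview]) -> bool:
    """Allow execution only after an independent, approving human review."""
    if review is None:
        return False  # undocumented oversight is treated as no oversight
    if review.reviewer == review.analyst:
        return False  # same person cannot both analyze and approve
    return review.approved
```

The point of the gate is not the code itself but the default: absent a record, the answer is no.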

10. Audit-Ready Documentation

Executives must assume every decision may need to be explained later.

Audit-ready documentation includes:

- The decision owner

- The problem definition

- Assumptions

- Risks

- Scenarios

- Mitigations

- Oversight structure

- Timelines

- Kill metrics

- Review gates

- Rationale for the final direction

AI does not create a governance record. Organizations must impose one.

Without an audit trail, decisions cannot be defended, reconstructed, or improved.
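The eleven elements listed above can be captured in a single decision record with a built-in completeness check. This is a sketch, not a prescribed schema: the class name `DecisionRecord`, the field names, and the choice of plain strings and lists are assumptions made for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DecisionRecord:
    decision_owner: str = ""
    problem_definition: str = ""
    assumptions: list[str] = field(default_factory=list)
    risks: list[str] = field(default_factory=list)
    scenarios: list[str] = field(default_factory=list)
    mitigations: list[str] = field(default_factory=list)
    oversight_structure: str = ""
    timelines: str = ""
    kill_metrics: list[str] = field(default_factory=list)
    review_gates: list[str] = field(default_factory=list)
    rationale: str = ""

    def missing_elements(self) -> list[str]:
        """Names of elements that are still empty, in the order listed above."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_audit_ready(self) -> bool:
        return not self.missing_elements()
```

A record that reports its own gaps lets the decision owner close them before approval, instead of discovering them during an audit.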

The Standard Does Not Change Because AI Is Involved

Leaders remain accountable for:

AI accelerates the thinking. Governance ensures the thinking is safe.

Executives who rely on AI without these controls are not gaining efficiency. They are inheriting risk.

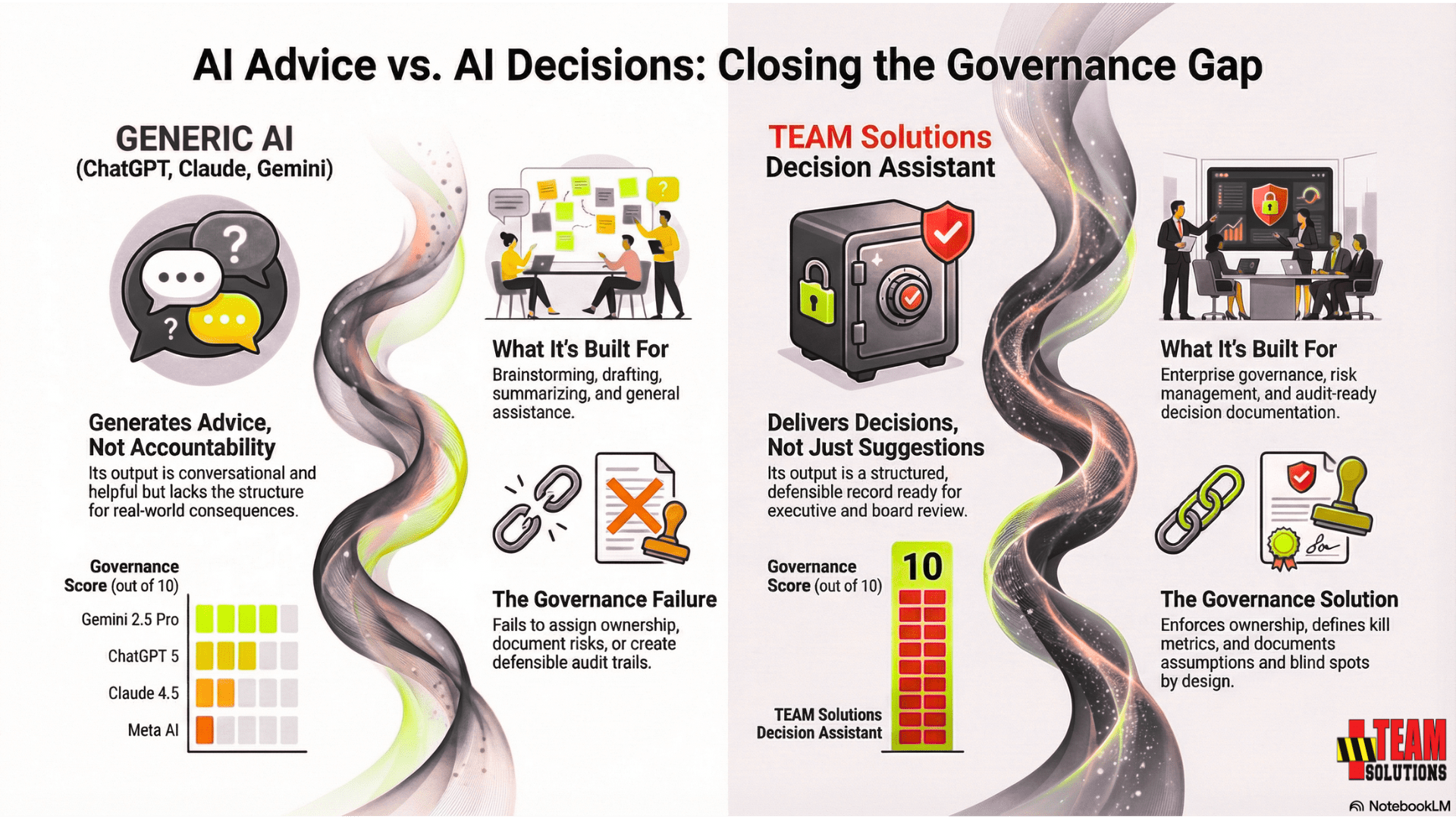

Why Generic AI Fails Governance

Generative AI models are remarkable tools. They write clearly, summarize quickly, and produce analysis that feels polished and authoritative.

None of that means they meet the governance standards required for high-stakes decisions.

These systems were built for interaction, not accountability. They excel at language, not governance. When leaders treat them as decision systems, they expose their organizations to unnecessary risk.

This section explains the core reasons generic models fail governance, regardless of how strong the output appears.

Built for Conversation, Not Responsibility

Systems like ChatGPT, Claude, Gemini, and Meta AI were engineered to provide information, creativity, and general assistance.

They were not engineered to:

- Assign ownership

- Document assumptions

- Track risk boundaries

- Enforce oversight

- Create decision records

- Comply with audit standards

These are governance functions, not language functions. No general model handles them by default.

No Built-In Accountability

AI can generate options and recommendations, but it cannot:

- Claim responsibility

- Accept consequences

- Define escalation paths

- Identify decision owners

Without accountability, leaders cannot rely on the output for decisions that must later be defended.

Hidden Assumptions and Blind Spots

AI output feels complete, but it hides critical information:

- What data the model weighted

- What it ignored

- What it misinterpreted

- What uncertainties were present

- Where assumptions replaced facts

Executives cannot defend a decision if the assumptions behind it are invisible.

Governance requires transparency. Generic AI produces the opposite.

Risk Treated as Narrative, Not Structure

When AI mentions risks, it presents them as part of a paragraph, not as part of a structured risk model.

Generic AI does not:

- Prioritize risks

- Tie risks to scenarios

- Align risks with mitigation steps

- Assign owners

- Define validation triggers

This weakness becomes obvious the moment the output is reviewed by a risk officer, compliance team, or auditor.

No Scenario Range

Executives make decisions based on range, not single-path answers.

Generic AI tends to:

- Provide one recommendation

- Overlook best-case and worst-case scenarios

- Skip variance analysis

- Miss risk boundaries

- Avoid quantifying impacts

Boards expect scenario range. Generic AI does not provide it unless heavily prompted, and even then it lacks structure.

No Review Gates or Timelines

AI cannot determine:

- When a decision must be revisited

- Which conditions trigger reassessment

- What data must be reviewed

- Who is responsible for oversight

This creates drift. Drift destroys governance.

No Kill Metrics

Kill metrics are the thresholds where action must stop.

Generic AI does not create:

- Stop conditions

- Risk limits

- Failure triggers

- Escalation rules

Without kill metrics, decisions can continue long after they should have been paused.
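A kill metric can be expressed as a named threshold that is checked against observed values. The sketch below is one possible Python representation under stated assumptions: the names `KillMetric` and `breached_metrics`, the example metrics, and the limits are all hypothetical. Treating a missing observation as a breach is also an assumption made here, on the reasoning that absent data should trigger escalation rather than silence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillMetric:
    """A stop condition: a named limit and the direction that counts as a breach."""
    name: str
    limit: float
    breach_when_above: bool = True  # False: breach occurs when the value falls below the limit

    def is_breached(self, observed: float) -> bool:
        if self.breach_when_above:
            return observed > self.limit
        return observed < self.limit


def breached_metrics(metrics, observations):
    """Return the names of kill metrics whose stop condition is met.

    A metric with no observation is reported as breached (assumption:
    missing data should force a human review).
    """
    breached = []
    for metric in metrics:
        value = observations.get(metric.name)
        if value is None or metric.is_breached(value):
            breached.append(metric.name)
    return breached


# Hypothetical metrics and readings for illustration only.
metrics = [
    KillMetric("budget_overrun_pct", limit=15.0),
    KillMetric("customer_retention_pct", limit=90.0, breach_when_above=False),
]
observations = {"budget_overrun_pct": 18.2, "customer_retention_pct": 93.0}
print(breached_metrics(metrics, observations))
```

The code only reports a breach. Whether to pause, escalate, or stop is a human decision made by the named owner.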

Not Designed for HITL (Human in the Loop)

HITL is mandatory for regulated, financial, HR, legal, and operational decisions.

Generic AI does not:

- Require human oversight

- Warn when oversight is needed

- Document where human authority is required

- Identify what cannot be delegated

AI is helpful, but it is not a governance actor. If HITL is not explicit, organizations take on silent risk.

No Audit Trail

Generic AI leaves organizations with:

- No record of assumptions

- No version history

- No decision log

- No rationale behind recommendations

- No documentation that can survive a review

Boards and regulators do not accept decisions built on disappearing information.

Governance requires evidence. Generic AI provides none.

Executive Output Is Too Casual or Too Narrative

Models often deliver:

- Conversational tone

- Narrative thinking

- Excessive detail

- Vague role boundaries

- Soft language

- Overly confident statements

Executives do not present decisions this way. Boards will not accept decisions written this way. Auditors cannot evaluate decisions framed this way.

Generic AI produces content. Governance requires discipline.

Summary of Governance Failures

Generic AI is powerful, but it cannot meet the minimum governance requirements:

- No ownership

- No assumptions

- No risk structure

- No scenario range

- No oversight

- No kill metrics

- No review gates

- No audit trail

Executives who rely on generic AI for high-stakes decisions are not improving quality. They are accelerating exposure.

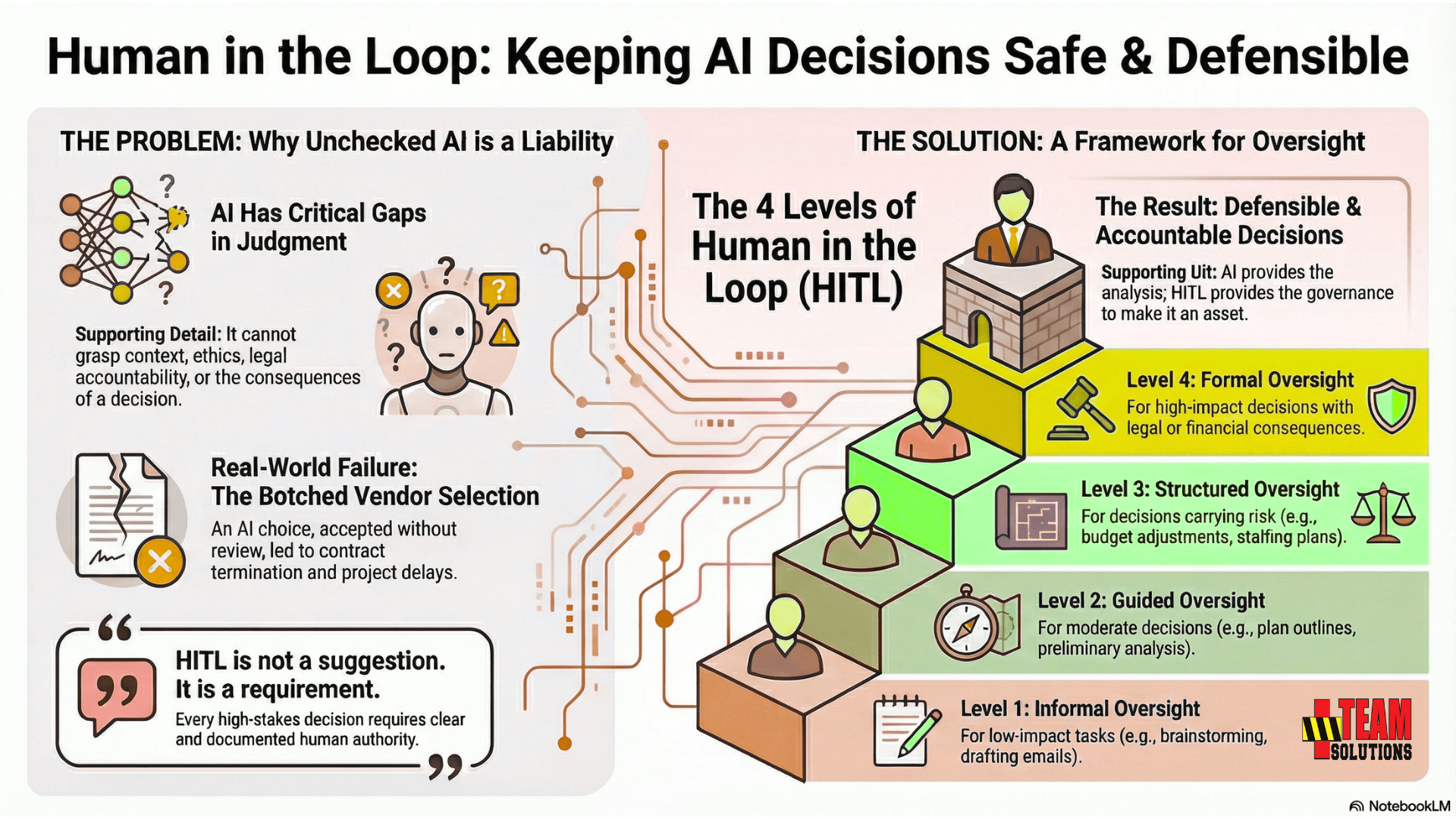

The Role of Human in the Loop (HITL)

Every high-stakes decision requires clear human authority. AI does not replace that responsibility. It does not see context, understand political realities, interpret organizational priorities, or carry accountability for outcomes.

This is why HITL is not a suggestion. It is a requirement.

What is HITL?


Human in the Loop is the oversight structure that ensures decisions influenced by AI still meet legal, operational, ethical, and governance standards.

Without HITL, organizations drift into a model where responsibility is confused, documentation is incomplete, and decisions cannot be defended when challenged.

What HITL Actually Means

HITL is the active involvement of a responsible human at key points in the decision process.

It is not passive review. It is not a casual glance at the output.

It is an explicit declaration that:

- A human owns the decision

- A human validates assumptions

- A human interprets risk

- A human defines boundaries

- A human approves the final direction

HITL is the mechanism that keeps AI from overstepping its role.

Why HITL Cannot Be Optional

HITL is mandatory because AI lacks:

- Legal accountability

- Operational authority

- Ethical judgment

- Contextual understanding

- Real-world constraints

- Awareness of organizational dynamics

- The ability to accept consequences

These gaps create exposure in environments where decisions have lasting effects.

Regulators, auditors, and governance committees expect human oversight for decisions that carry risk. This expectation does not disappear simply because AI assisted the process.

The HITL Failure Pattern

Organizations that skip HITL experience predictable failures:

By the time a failure surfaces, the organization cannot reconstruct what happened.

Real-World Example: The Vendor Selection Without Human Oversight

A technology company used AI to evaluate vendor proposals for a new CRM system. The AI analyzed pricing, feature sets, implementation timelines, and customer reviews, then recommended Vendor B over Vendor A.

The procurement team accepted the recommendation without additional review and moved forward with contract negotiations.

Two weeks into implementation, the IT team discovered that Vendor B's system was incompatible with the company's existing security architecture - a constraint that had not been included in the AI's analysis.

The company faced:

- Contract termination costs

- Delayed CRM implementation

- Emergency vendor re-evaluation

- Board scrutiny over procurement controls

The AI analysis was thorough within its parameters. But no human had validated whether those parameters covered all critical constraints. HITL would have caught the gap before contracts were signed.

The HITL Hierarchy: Four Levels of Oversight

Not all decisions carry the same level of risk. HITL needs structure. Mature organizations establish a hierarchy that defines when oversight is required.

Level 1 - Informal Oversight

For low-impact tasks:

- AI ideas are reviewed

- A human confirms usability

- No material consequences

Examples: Drafting emails, summarizing notes, brainstorming.

Level 2 - Guided Oversight

For moderate decisions:

- A human validates facts

- A human checks assumptions

- A human approves next steps

Examples: Operational recommendations, plan outlines, preliminary analysis.

Level 3 - Structured Oversight

For decisions that carry risk:

- Assumptions documented

- Risks identified

- Scenarios created

- Mitigation defined

- Decision owner assigned

Examples: Budget adjustments, staffing plans, compliance interpretations.

Level 4 - Formal Oversight

For decisions with legal, financial, or organizational impact:

- Full decision brief

- Review gates

- Kill metrics

- Oversight chain documented

- Audit-ready output prepared

Examples: Executive hiring, restructuring, financial exposure, crisis decisions.

Most organizations do not define this hierarchy. Without it, oversight becomes inconsistent and leaders assume AI can be trusted where it should not.
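The four oversight levels can be captured as an explicit routing rule so that the required level is decided before AI output is used. The following Python sketch is illustrative only. The three input flags and the function name `required_oversight` are assumptions introduced here, and each organization would need to define its own criteria for what counts as moderate, risk-bearing, or formally material.

```python
from enum import IntEnum


class OversightLevel(IntEnum):
    INFORMAL = 1    # low-impact tasks
    GUIDED = 2      # moderate decisions
    STRUCTURED = 3  # decisions that carry risk
    FORMAL = 4      # legal, financial, or organizational impact


def required_oversight(*, legal_financial_or_org_impact: bool,
                       carries_risk: bool,
                       moderate_impact: bool) -> OversightLevel:
    """Return the highest oversight level whose condition applies.

    The checks run from most to least demanding, so a decision that
    qualifies for several levels always receives the strictest one.
    """
    if legal_financial_or_org_impact:
        return OversightLevel.FORMAL
    if carries_risk:
        return OversightLevel.STRUCTURED
    if moderate_impact:
        return OversightLevel.GUIDED
    return OversightLevel.INFORMAL


# Hypothetical classification of a restructuring decision.
level = required_oversight(
    legal_financial_or_org_impact=True,
    carries_risk=True,
    moderate_impact=True,
)
print(level.name, int(level))
```

Evaluating the strictest condition first is a deliberate choice in this sketch: when a decision is ambiguous, it defaults upward rather than downward.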

HITL Protects the Organization

HITL is not a speed bump. It is a safeguard.

It prevents:

When AI speeds up analysis, HITL ensures the organization does not speed up into a bad decision.

HITL Sets the Standard for Defensibility

Executives know that decisions are always judged in hindsight.

When reviewed, the only questions that matter are:

HITL is the structure that answers those questions clearly.

HITL and AI Must Work Together

AI provides analysis. HITL provides governance.

Together, they create decisions that are:

Without HITL, AI-assisted decisions are exposed.

With HITL, AI becomes an asset instead of a liability.

Risk, Liability, and Duty of Care

AI-assisted decisions carry the same consequences as traditional decisions. What changes is the speed, volume, and complexity of the information feeding them.

When organizations rely on AI without the governance controls required for high-stakes environments, they create a new category of risk that is often invisible until it becomes a problem.

Executives remain accountable for every decision made under their authority, regardless of how much AI assisted the process. The legal, financial, operational, and reputational exposure does not diminish simply because a model generated the initial analysis.

Duty of care does not transfer to a tool. It remains with the leader.

This section outlines the risks organizations face when AI-assisted decisions are made without proper governance.

Legal Disclaimer

This guide provides principles for governance and decision-making discipline. It does not constitute legal advice. Organizations should consult qualified legal counsel for jurisdiction-specific requirements, regulatory compliance, and liability considerations related to AI use in their specific contexts.

The New Exposure Categories

AI introduces several forms of risk that are not immediately obvious to leaders:

1. Assumption Risk

Models appear confident even when their reasoning is based on assumptions that are wrong, incomplete, or unstated.

This risk leads directly to:

- Flawed conclusions

- Premature recommendations

- Inaccurate scenario framing

Without documented assumptions, the organization cannot defend the decision later.

2. Accountability Risk

If AI generates the recommendation and no one claims ownership, then no one is accountable.

This creates:

- Unclear responsibility

- Inconsistent execution

- Poor alignment

- Finger-pointing when outcomes go wrong

Boards do not accept decisions without clear ownership.

3. Documentation Risk

AI produces content, not records.

Without governance, decisions are made with:

- No paper trail

- No rationale

- No decision journal

- No ability to reconstruct events

Audit failures originate in undocumented decisions.

4. Oversight Risk

AI does not ask for human review. It does not warn leaders that HITL is required. Lack of oversight becomes a direct liability.

5. Scenario Risk

AI often delivers a single recommended path. Executive decisions require best, likely, and worst scenarios. Missing scenarios lead to unprepared teams and unmanaged consequences.

6. Risk Integration Failure

AI may list risks in a paragraph, but it does not:

- Prioritize them

- Connect them to triggers

- Tie them to mitigation plans

- Assign ownership

This produces the illusion of risk awareness without actual risk control.

7. Legal and Compliance Risk

Regulators expect a defensible process.

AI-generated content does not satisfy:

- HR due diligence

- Financial controls

- Compliance interpretations

- Legal risk frameworks

Without governance, AI-assisted decisions become potential violations.

How AI Weakens Traditional Controls

AI changes decision patterns in ways traditional governance does not anticipate:

Traditional governance was built for slower, manual decision cycles. AI accelerates everything, including mistakes.

The Liability Chain Still Points to the Leader

When a decision goes wrong, the people reviewing it ask:

No investigator, auditor, or court accepts the explanation: "AI suggested it."

The liability chain always points back to the human decision owner. This does not change because AI is involved.

The Board View of AI-Assisted Decisions

Boards do not evaluate decisions based on how impressive the analysis looked.

They evaluate decisions based on:

AI does not inherently provide any of these. Governance must.

Boards are beginning to ask direct questions:

If the organization cannot answer these questions, it is already exposed.

Internal Audit and Regulatory Expectations

Audit teams require:

AI provides none of these on its own.

Regulatory bodies treat AI-assisted decisions as decisions made by humans, not as automated events.

The expectation is simple: If AI assisted the decision, the governance must be even stronger, not weaker.

Real-World Example: The HR Decision That Became a Legal Case

A retail organization used AI to analyze performance data and recommend candidates for a reduction-in-force during a restructuring. The AI identified employees based on productivity metrics, attendance patterns, and tenure.

HR leadership reviewed the list and approved the recommendations without additional analysis.

Three months later, a former employee filed a discrimination claim, alleging age bias in the selection process. During discovery, the organization could not produce:

- The assumptions behind the AI's weighting of performance factors

- Documentation of how the model handled protected class considerations

- Evidence of human review beyond cursory approval

- The decision rationale for each individual termination

The case settled. The settlement included provisions requiring the organization to implement formal governance controls around AI-assisted HR decisions.

The AI had not been intentionally biased. But the organization had no governance process to validate fairness, document assumptions, or ensure human oversight. The lack of governance turned a workforce decision into a liability.

The Real Cost of Ungoverned AI Decisions

Organizations underestimate the cost of governance failures. These failures surface as:

Many of these are expensive.

Some are existential.

All of them are preventable with proper governance.

Duty of Care for Modern Leaders

Duty of care in the AI era requires more than understanding the technology.

It requires:

- Knowing where AI is used

- Understanding what AI cannot do

- Enforcing oversight

- Ensuring traceability

- Preventing misuse

- Anchoring decisions in structure

Leaders are responsible for ensuring that AI does not outpace their governance.

Duty of care means building systems that match AI's speed with executive discipline.

The Governance Imperative

- AI adoption will continue accelerating. Governance must accelerate with it.

- Executives who fail to govern AI-assisted decisions inherit silent risk.

- Executives who build governance systems gain clarity, defensibility, and organizational confidence.

The choice is simple: Govern the decision process, or answer for it later.

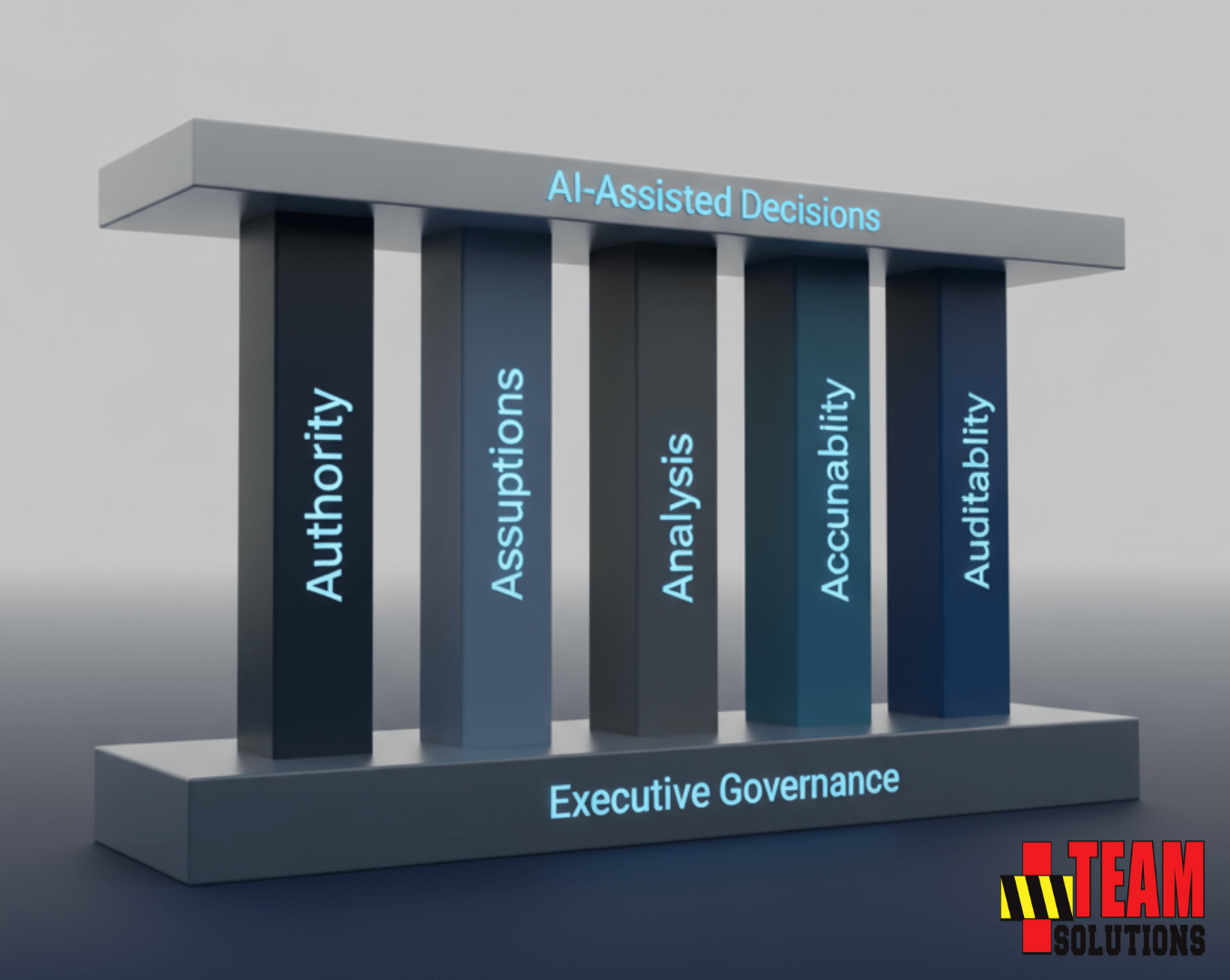

AI Governance vs. AI Decision Governance

AI governance frameworks, such as the widely adopted pillars of fairness, transparency, accountability, privacy, and reliability, focus on building responsible AI systems. Those principles are essential for ensuring AI behaves ethically and operates safely. But they do not close the Governance Gap.

An executive using a perfectly ethical, transparent AI tool to make a $10M investment decision still needs frameworks to ensure that decision is defensible.

The AI system may be fair and reliable, but the decision itself requires Authority, Assumptions, Analysis, Accountability, and Auditability structures to withstand board review, audit scrutiny, and regulatory inquiry.

One set of pillars builds trustworthy AI.

The other builds defensible decisions.

The Five-Pillar Governance Framework

AI can accelerate analysis, but only governance makes decisions defensible.

Executives cannot rely on polished language or confident output. They need a structure that forces clarity, exposes assumptions, defines accountability, and records the reasoning behind high-stakes choices.

The Five-Pillar Governance Framework is the foundation.

It provides the minimum standard required to govern any AI-assisted decision, regardless of complexity, industry, or tool. These pillars turn fast information into a controlled decision process that can stand up to scrutiny.

The Five Pillars

- Authority

- Assumptions

- Analysis

- Accountability

- Auditability

Each pillar addresses a specific governance weakness created by generic AI. Together, they eliminate the Governance Gap.

Note: This framework complements the McKenna 4AID Decision Model. The 4AID model structures how leaders think through decisions. The Five Pillars structure how those decisions are governed.

The Five Pillars of AI Decision Governance were synthesized entirely from Mike McKenna’s governance philosophy and decision-making principles. The structure was created with the help of AI as an original distillation of themes across Mike’s existing models, tools, and governance requirements.

Pillar One: Authority

Authority answers the most important question in any AI-assisted decision: Who is responsible for the final call?

AI cannot accept responsibility, so governance must define:

- The decision owner

- The decision scope

- Who approves the final direction

- Who is accountable for outcomes

- Who controls escalation

Authority removes ambiguity.

When ownership is unclear, decisions drift, teams stall, and accountability disappears.

A decision without a named owner is a decision that cannot be defended.

Pillar Two: Assumptions

Assumptions drive more decisions than data.

AI output is generated from statistical patterns, not from explicit logic that can be inspected. Without documenting assumptions, organizations lose:

- Why the decision made sense at the time

- What information the model relied on

- What uncertainties were known

- What gaps existed in data or context

- What conditions were believed to be true

Executives must record assumptions before action begins.

When assumptions are missing, decisions cannot be reconstructed or justified under review.

Assumptions are where decisions fail.

Governance ensures they are exposed, challenged, and documented.

Pillar Three: Analysis

Analysis is more than a paragraph of pros and cons. It requires disciplined structure.

Every AI-assisted decision must include:

- Risk identification

- Risk prioritization

- Scenario range

- Best, likely, and worst outcomes

- Impact assessment

- Mitigation plans

- Validation triggers

Generic AI produces unstructured analysis. Governance transforms it into a decision-ready model.

Executives need clarity about what could happen, not just what the model suggests.

Pillar Four: Accountability

Accountability defines the oversight that ensures decisions stay aligned with reality.

It includes:

- Review gates

- Kill metrics

- Escalation paths

- Responsible parties

- Oversight timing

- Human in the loop controls

AI does not build accountability into its output. Governance must.

Without accountability, decisions continue even when conditions change, risks grow, or assumptions collapse.

Oversight is what prevents minor issues from becoming organizational failures.

Governance demands control, not autopilot.

Pillar Five: Auditability

Every major decision eventually gets questioned.

Auditability ensures the organization can produce a complete record that answers:

- Who decided

- Why the decision was made

- What information was used

- What assumptions existed

- What risks were known

- What scenarios were considered

- What oversight was applied

- What stop conditions were established

Generic AI provides no decision record. Governance creates one.

Auditability is not optional. It protects the organization, the executive, and the people affected by the decision.

How the Five Pillars Work Together

When combined, the pillars create a complete decision governance system:

- Authority anchors responsibility

- Assumptions reveal the truth behind the logic

- Analysis creates clarity and structure

- Accountability enforces oversight

- Auditability preserves the record

This is the framework that turns AI-assisted insights into controlled, defensible decisions.
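One way to apply the pillars consistently is to check a decision brief against the elements each pillar names. The Python sketch below is a hypothetical illustration, not part of the framework itself. The key names in `PILLAR_ELEMENTS`, the function `pillar_gaps`, and the sample brief are assumptions chosen here to mirror the pillar descriptions above.

```python
# Elements per pillar, paraphrased from the pillar descriptions.
# The key names are illustrative assumptions, not a fixed schema.
PILLAR_ELEMENTS = {
    "Authority": ["decision_owner", "decision_scope", "final_approver",
                  "accountable_party", "escalation_owner"],
    "Assumptions": ["documented_assumptions", "known_uncertainties", "data_gaps"],
    "Analysis": ["risks", "risk_priorities", "scenarios",
                 "impact_assessment", "mitigation_plans", "validation_triggers"],
    "Accountability": ["review_gates", "kill_metrics", "escalation_paths",
                       "responsible_parties", "oversight_timing", "hitl_controls"],
    "Auditability": ["decision_rationale", "information_sources"],
}


def pillar_gaps(brief: dict) -> dict:
    """Return, for each pillar, the elements that are absent or empty in the brief."""
    gaps = {}
    for pillar, elements in PILLAR_ELEMENTS.items():
        missing = [name for name in elements if not brief.get(name)]
        if missing:
            gaps[pillar] = missing
    return gaps


# Hypothetical, partially completed decision brief.
brief = {
    "decision_owner": "COO",
    "decision_scope": "Regional staffing plan",
    "final_approver": "CEO",
    "accountable_party": "COO",
    "escalation_owner": "CEO",
    "documented_assumptions": ["Demand stays within 5% of forecast"],
    "risks": ["Attrition spike"],
    "scenarios": ["best", "likely", "worst"],
    "kill_metrics": ["Attrition above 12%"],
}
gaps = pillar_gaps(brief)
for pillar, missing in gaps.items():
    print(f"{pillar}: missing {', '.join(missing)}")
```

A pillar that does not appear in the result has every listed element present. The check confirms presence only and cannot assess quality.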

The Framework Eliminates the Governance Gap

Without this framework, organizations face:

With the framework, AI becomes an asset instead of a liability.

Governance is what separates informed decisions from exposed decisions.

Governance Integration Guide: Fitting Into Existing Structures

The Five Pillars framework does not replace existing governance structures. It integrates with them.

Organizations already have boards, risk management systems, quality processes, and internal audit functions. The framework strengthens these structures by ensuring AI-assisted decisions meet the standards these oversight bodies already require.

Boards expect three things from management: clear accountability for major decisions, documented reasoning that can withstand scrutiny, and early warning when assumptions change or risks materialize.

The Five Pillars framework delivers exactly what boards need without creating additional reporting burden.

- Authority clarifies who is accountable for AI-assisted decisions before the board asks. When a decision comes under review, the board knows exactly who owns it and who approved it.

- Assumptions provide the context boards need to evaluate whether management's reasoning was sound at the time. When conditions change, documented assumptions allow the board to distinguish between bad luck and bad judgment.

- Analysis structures risk assessment in the format boards expect: identified risks, prioritized threats, scenario range, mitigation plans, and validation triggers. This is not new work. It is the same analysis boards already require, applied consistently to AI-assisted decisions.

- Accountability defines the oversight mechanisms boards demand: review gates, kill metrics, escalation paths, and responsible parties. These controls ensure management is monitoring decisions actively, not passively hoping for success.

- Auditability creates the complete decision record that boards can review months or years after a decision was made. When the board asks "why did we think this would work?", the framework ensures documentation exists to answer that question.

Board Committee Alignment:

- Audit Committee: Receives decision records demonstrating proper internal controls, documented assumptions, and audit trail preservation

- Risk Committee: Reviews structured risk analysis, kill metrics, and mitigation plans from major AI-assisted decisions

- Compensation Committee: Uses decision governance records to evaluate executive performance and accountability

- Governance Committee: Assesses whether AI decision-making processes meet fiduciary standards

Quarterly Dashboard Format:

Boards do not need details on every AI-assisted decision. They need aggregate visibility into governance health:

- Number of material decisions governed using the framework

- Percentage of decisions with complete Five Pillars documentation

- Decisions reversed or modified based on kill metric triggers

- Average time from decision to review gate checkpoint

- Audit findings related to AI-assisted decision governance

This dashboard takes five minutes to present and gives the board confidence that AI is being used responsibly.
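The five dashboard measures above can be computed from a simple log of governed decisions. The sketch below is a minimal Python illustration under stated assumptions: the `GovernedDecision` fields, the function name `quarterly_dashboard`, and the sample entries are hypothetical, and a real implementation would draw on the organization's own decision register.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class GovernedDecision:
    """One material AI-assisted decision as logged for board reporting."""
    decided_on: date
    first_review_on: Optional[date]  # None if no review gate has occurred yet
    documentation_complete: bool     # all Five Pillars documented
    changed_by_kill_metric: bool     # reversed or modified after a trigger
    audit_findings: int = 0


def quarterly_dashboard(decisions):
    """Aggregate the five board-level measures from a list of decisions."""
    total = len(decisions)
    days = [(d.first_review_on - d.decided_on).days
            for d in decisions if d.first_review_on is not None]
    complete = sum(d.documentation_complete for d in decisions)
    return {
        "material_decisions_governed": total,
        "pct_complete_documentation": round(100 * complete / total, 1) if total else 0.0,
        "changed_by_kill_metric": sum(d.changed_by_kill_metric for d in decisions),
        "avg_days_to_review_gate": mean(days) if days else None,
        "audit_findings": sum(d.audit_findings for d in decisions),
    }


# Hypothetical quarter with three logged decisions.
decisions = [
    GovernedDecision(date(2025, 1, 10), date(2025, 2, 9), True, False),
    GovernedDecision(date(2025, 2, 1), date(2025, 2, 21), True, True, audit_findings=1),
    GovernedDecision(date(2025, 3, 1), None, False, False),
]
dashboard = quarterly_dashboard(decisions)
for measure, value in dashboard.items():
    print(f"{measure}: {value}")
```

Decisions that have not yet reached a review gate are excluded from the average rather than counted as zero, so the figure is not understated.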

The Five Pillars framework strengthens enterprise risk management (ERM) by ensuring AI-assisted decisions flow through existing risk identification, assessment, and mitigation processes.

Most organizations already have enterprise risk frameworks, such as COSO, ISO 31000, or proprietary models. The challenge is that AI-assisted decisions often bypass these frameworks because they feel fast, informal, or analytical rather than strategic.

The framework closes this gap by making risk governance mandatory for any AI-assisted decision above a defined materiality threshold.

Integration Points:

- Risk Identification: The Analysis pillar forces explicit documentation of risks before action begins, feeding directly into the organization's risk register.

- Risk Assessment: Scenario analysis, probability evaluation, and impact assessment required by the Analysis pillar align with standard ERM risk rating methodologies.

- Risk Mitigation: Mitigation plans, validation triggers, and kill metrics required by the Accountability pillar become part of the organization's risk treatment strategy.

- Risk Monitoring: Review gates and escalation paths required by the Accountability pillar integrate with existing risk monitoring and reporting cycles.

Compliance Alignment:

Organizations in regulated industries already face compliance requirements around decision-making, internal controls, and documentation. The Five Pillars framework supports compliance rather than creating parallel requirements:

- SOX Compliance: Decision governance creates internal controls over financial decision-making that auditors expect

- GDPR/Privacy Regulations: The Auditability pillar ensures AI-assisted decisions affecting personal data have complete documentation

- Industry-Specific Regulations: Healthcare (HIPAA), financial services (SEC, FINRA), and energy (FERC) all require documented decision processes that the framework provides

The framework does not ask compliance teams to do new work.

It ensures the work they already require for traditional decisions also applies to AI-assisted decisions.

Organizations with ISO 9001 certification or similar quality management systems already have documented processes, internal audits, and continuous improvement mechanisms.

The Five Pillars framework integrates seamlessly:

- Process Documentation: The framework provides the standard operating procedure for AI-assisted decision-making, meeting ISO 9001 requirements for documented processes.

- Internal Audits: The Auditability pillar creates the records internal auditors need to assess whether AI-assisted decisions meet quality standards.

- Corrective Action: When AI-assisted decisions produce unexpected outcomes, the documented assumptions and analysis allow root cause analysis that feeds into corrective action processes.

- Continuous Improvement: Review gates and kill metrics create feedback loops that drive process refinement over time, exactly as quality management systems require.

- Management Review: The framework generates the decision governance metrics that leadership reviews during periodic management review meetings required by ISO 9001.

Organizations do not need separate governance for AI decisions.

They extend existing quality management practices to include AI-assisted decision-making.

Internal audit teams assess whether management decisions follow established processes, include appropriate controls, and create adequate documentation.

The Five Pillars framework gives internal auditors exactly what they need to evaluate AI-assisted decisions:

- Authority: Auditors can verify that decision ownership was clearly assigned and approved before action began.

- Assumptions: Auditors can assess whether assumptions were documented, tested, and reasonable given the information available at the time.

- Analysis: Auditors can evaluate whether risk identification, scenario analysis, and mitigation planning met organizational standards.

- Accountability: Auditors can confirm that oversight mechanisms were defined, review gates were executed, and escalation paths were followed.

- Auditability: Auditors can access complete decision records without requiring forensic reconstruction.

Audit Questions the Framework Answers:

- Who had authority to make this decision?

- What information was the decision based on?

- What assumptions were made?

- How were risks identified and evaluated?

- What oversight was applied?

- When were review checkpoints conducted?

- What would have triggered reassessment or reversal?

- Can this decision be reconstructed six months later?

Internal audit does not need specialized AI expertise to audit AI-assisted decisions. The framework translates AI decision-making into the same governance standards auditors already apply to traditional decisions.

Self-Assessment vs. Independent Review:

The framework supports both internal self-assessment and independent audit review:

- First Line: Decision owners apply the framework and document compliance

- Second Line: Risk and compliance teams review governance completeness

- Third Line: Internal audit conducts independent assessment of framework effectiveness

This three-lines-of-defense model is standard in risk management. The Five Pillars framework simply ensures AI-assisted decisions flow through it.

Implementation Without Disruption

The most effective governance integration follows this sequence:

1. Map existing governance requirements - Document what your board, risk management, quality systems, and internal audit already require for material decisions

2. Identify the gaps - Determine where AI-assisted decisions currently bypass these requirements

3. Apply the Five Pillars - Use the framework to ensure AI-assisted decisions meet the same standards as traditional decisions

4. Leverage existing reporting - Add AI decision governance metrics to existing dashboards, risk reports, and audit findings rather than creating new reports

5. Train once, apply everywhere - Teach decision owners that the Five Pillars framework is not additional governance; it is consistent governance applied to AI-assisted decisions

The framework does not create parallel governance. It eliminates the gap where AI-assisted decisions were escaping governance entirely.

Governance integration is not about adding complexity. It is about ensuring AI decisions receive the same disciplined oversight that every other material decision already receives.

How to Evaluate Any AI System for Decision-Making

Executives cannot rely on intuition when evaluating whether an AI system is appropriate for decision-making.

- The output may look strong.

- The analysis may sound compelling.

- The interface may feel polished.

None of that indicates whether the tool meets the governance requirements necessary for high-stakes environments.

Evaluation must be systematic.

This section provides a clear rubric that leaders can use to determine when AI is appropriate, when it becomes unsafe, and what standards every AI-assisted decision must satisfy.

This evaluation applies to every model in the market, including ChatGPT, Claude, Gemini, and Meta AI, as well as any internal or fine-tuned systems.

The Core Evaluation Question

A simple question defines whether an AI system is safe for decisions:

❓ Does this system produce information, or does it support governed decisions?

If the system only produces content, summaries, or suggestions, it is not safe for decisions that carry legal, financial, operational, or reputational consequences.

Governance requires structure. AI requires oversight.

Executives must determine whether the system can support both.

The Evaluation Rubric

Below are the twelve criteria that determine whether an AI tool is safe for decision-making.

If the system fails any of these, it cannot be used for material decisions.

How to Score an AI System

Count how many of the twelve criteria the system meets, then apply these bands:

- 10 to 12 criteria met: Safe for executive governance with oversight

- 7 to 9 criteria met: Safe for operational use, not executive decisions

- 4 to 6 criteria met: Safe for brainstorming only

- 0 to 3 criteria met: Unsafe for organizational use

Most generic AI models score between 1 and 4.

They are designed for assistance, not governance.
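The scoring bands above translate directly into a small lookup. The Python sketch below is illustrative only. The function name `classify_ai_system` is an assumption, and it takes the count of criteria met as input because the twelve criteria themselves are assessed by people, not by code.

```python
def classify_ai_system(criteria_met: int) -> str:
    """Map the number of rubric criteria met (0 to 12) to its usage band."""
    if not 0 <= criteria_met <= 12:
        raise ValueError("criteria_met must be between 0 and 12")
    if criteria_met >= 10:
        return "Safe for executive governance with oversight"
    if criteria_met >= 7:
        return "Safe for operational use, not executive decisions"
    if criteria_met >= 4:
        return "Safe for brainstorming only"
    return "Unsafe for organizational use"


# Example: a tool that meets 4 of the 12 criteria.
print(classify_ai_system(4))
```

Rejecting out-of-range counts keeps a data-entry error from being silently reported as a valid band.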

When AI Is Appropriate

AI is appropriate for:

These activities do not require the depth of governance that high-stakes decisions demand.

When AI Is Unsafe

AI should not be used as the basis for decisions that involve:

These categories require governance that generic AI cannot provide.

Decision Tree for Leaders

Below is a simple decision tree executives can follow.

Safe to Proceed

The decision is safe to move forward.

Governance is not complexity. Governance is discipline.

The Practical Outcome

Evaluation is not about whether AI is good or bad.

It is about whether the system supports:

Leaders must evaluate their AI tools with the same standards used for any other high-stakes system.

- If the tool supports governance, it is an asset.

- If it does not, it becomes a risk.

High-Stakes Use Cases: Where AI-Assisted Decisions Break First

AI is already being used across critical domains of organizational leadership. Often, leaders do not realize the stakes until an assumption is wrong, a risk is missed, or a decision is challenged by a board, regulator, legal team, or auditor.

High-stakes decisions require structure. They require oversight. They require documentation. They require governance.

This section identifies the environments where AI creates the highest exposure and explains why generic AI output cannot be trusted without a governance framework around it.

Succession and Leadership Transitions

Succession decisions are among the most politically sensitive and strategically consequential choices an organization makes.

AI cannot:

- Weigh political dynamics

- Evaluate trust relationships

- Interpret historical performance in context

- Understand cultural alignment

- Account for board expectations

Succession decisions require scenario range, risk assessment, stakeholder alignment, and defensible reasoning.

AI can inform, but it cannot guide or govern the decision.

Budgeting and Financial Decisions

Financial decisions carry immediate operational impact and downstream consequences.

AI cannot:

- Assess risk tolerance

- Interpret financial controls

- Apply audit requirements

- Understand regulatory constraints

- Predict cross-departmental effects

Budgets require governance because they influence strategy, resource allocation, and long-term planning.

AI-generated recommendations without governance create significant exposure.

HR and Personnel Actions

Personnel decisions involve legal, ethical, and reputational risk.

AI cannot:

- Interpret intent

- Assess behavioral context

- Weigh political considerations

- Ensure compliance

- Understand historical discipline patterns

HR actions require traceability, defensible reasoning, and alignment with policy.

Generic AI output is not acceptable for decisions that may lead to disputes or litigation.

Legal and Compliance Interpretation

Compliance decisions often determine whether the organization remains within regulatory boundaries.

AI cannot:

- Interpret legal nuance

- Understand jurisdictional context

- Align with internal policies

- Assess the risk of misclassification

- Identify when legal review is mandatory

Compliance interpretations demand governance, documentation, and oversight.

AI output is not a substitute for structured decision-making.

Crisis or Incident Response

Crisis decisions require speed, clarity, and disciplined execution.

AI cannot:

- Understand evolving ground truth

- Account for human factors

- Integrate multi-agency coordination

- Predict cascading operational failures

- Determine acceptable risk thresholds

Crisis leadership demands review gates, kill metrics, and scenario planning.

AI can assist with information, but governance must guide the response.

Operational Planning and Resource Allocation

Operations decisions involve:

- Timing

- Sequencing

- Resource limits

- Cross-team dependencies

- Risk prioritization

AI generates plans without understanding operational friction, cultural norms, or capacity constraints.

Operational decisions require structured oversight and human validation.

Vendor Selection and Strategic Partnerships

Vendor decisions are high-risk because they involve:

- Long-term commitments

- Financial exposure

- Legal obligations

- Integration challenges

- Reputation implications

AI cannot evaluate these elements with the nuance required for strategic choices.

Governance ensures decisions align with strategy and risk posture.

Audit Preparation and Internal Controls

Audits demand:

- Traceability

- Documented assumptions

- Aligned controls

- Consistent logic

- Defensible history

AI can help prepare supporting material, but it cannot produce the governance record auditors expect.

Governance must be structured and deliberate.

Organizational Restructuring

Restructuring decisions involve:

- Workforce shifts

- Stakeholder impact

- Financial modeling

- Legal obligations

- Cultural consequences

AI lacks visibility into organizational dynamics and downstream effects.

Governance ensures leaders understand the full implications before action.

Strategic Direction and Long-Term Planning

Strategy decisions shape the organization for years.

AI cannot:

- Evaluate leadership capacity

- Weigh internal politics

- Predict market reactions

- Align with cultural realities

- Understand investor priorities

Strategic planning must be supported by governance, not driven by generative output.

The Pattern Across All High-Stakes Domains

Every high-stakes decision shares the same vulnerabilities when AI is involved:

These are governance failures, not AI failures.

AI accelerates decision-making. Governance ensures decisions remain safe.

Common Objections & Executive Responses

"This Seems Like Bureaucratic Overhead"

This is the most common objection, usually from executives who equate governance with delay.

Governance is not about slowing decisions. It is about preventing the reversals that cost ten times more than the original decision.

Consider the math. Applying the Five Pillars framework adds 15-20 minutes to a material decision. Unwinding a poorly governed decision takes three to six months, consumes executive attention, burns credibility with the board, and often requires external advisors to reconstruct what actually happened.
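The comparison can be made concrete with a rough calculation. The sketch below is illustrative only. The 20-minute and three-month figures come from the paragraph above; the 160 working hours per month, and the simplification that a reversal occupies one person full time, are assumptions made here for the sake of the arithmetic.

```python
REVIEW_MINUTES = 20            # upper end of the 15-20 minute estimate
REVERSAL_MONTHS = 3            # lower end of the three-to-six month estimate
WORKING_HOURS_PER_MONTH = 160  # assumption: one person, full time


def reviews_per_reversal(review_minutes: float,
                         reversal_months: float,
                         hours_per_month: float = WORKING_HOURS_PER_MONTH) -> float:
    """How many structured reviews fit in the time one reversal consumes."""
    reversal_minutes = reversal_months * hours_per_month * 60
    return reversal_minutes / review_minutes


if __name__ == "__main__":
    ratio = reviews_per_reversal(REVIEW_MINUTES, REVERSAL_MONTHS)
    print(f"One reversal consumes the time of about {ratio:.0f} structured reviews")
```

Under these assumptions, structured review pays for itself in time if it prevents even one reversal in well over a thousand material decisions. Organizations should substitute their own figures.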

The real overhead is not documentation. The real overhead is explaining to your board why a decision that seemed obvious six months ago is now being reversed with no clear accountability trail.

Governance is not bureaucracy. Governance is insurance against expensive mistakes.

"Our Team Already Does This Informally"

Informal governance fails the moment someone asks "how do you know?"

When a decision goes wrong, the board does not accept "we talked about it" as evidence of due diligence. Auditors do not accept "the team was aligned" as proof of oversight. Regulators do not accept "everyone understood the risks" as documentation of accountability.

Informal governance works until it does not. The test is not whether your team feels confident. The test is whether you can produce a complete decision record under review.

If your team already thinks through authority, assumptions, analysis, accountability, and auditability, formalizing the process adds very little time. It simply creates the record that protects the organization when outcomes differ from expectations.

Informal governance is not governance. It is hope disguised as process.

"We're Too Small to Need This Level of Governance"

Size determines complexity, not accountability.

A $5 million decision at a $50 million company carries the same fiduciary duty as a $50 million decision at a $500 million company. The board's expectations do not scale down because your revenue is smaller.

In fact, smaller organizations face higher governance risk because a single bad decision has disproportionate impact. A poorly governed vendor selection that costs a Fortune 500 company $10 million is a rounding error. A loss of the same size at a mid-market firm can force layoffs, delay growth plans, or trigger covenant violations.

Small organizations also have less margin for error in reputation. One governance failure that becomes public can destroy years of credibility with customers, investors, and talent.

Your size does not reduce your accountability. It increases your exposure.

"AI Is Just Another Tool - We Don't Have 'Spreadsheet Governance'"

This comparison misunderstands the difference between tools that support analysis and tools that generate recommendations.

Spreadsheets display data. They do not tell you what to do. Humans interpret the numbers, evaluate the context, and make the call. The spreadsheet has no opinion.

AI generates confident, persuasive recommendations that can bypass human judgment. The output reads like analysis written by a trusted advisor. It uses authoritative language. It structures arguments. It provides reasons.

This is why AI requires different governance. The risk is not calculation error. The risk is persuasive output that masks weak assumptions, incomplete analysis, or missing accountability.

Generic AI does not say "I do not have enough information to recommend a course of action." It produces an answer regardless of the quality of the input. Governance forces the human decision-maker to validate that answer before acting on it.

Spreadsheets assist. AI persuades. That difference demands governance.

"This Will Slow Us Down When We Need to Move Fast"

Speed without governance is recklessness disguised as agility.

The framework adds 15-20 minutes to document authority, assumptions, analysis, accountability, and auditability. If a material decision cannot withstand 20 minutes of structured review, it should not be made.

The executives who claim governance slows them down are the same executives who spend three months unwinding decisions that were made too fast. Ask them to calculate the cost of those reversals. The answer is always higher than the 20 minutes they refused to spend upfront.

Fast decisions are not inherently better decisions. Fast decisions with clear ownership, documented assumptions, structured risk analysis, defined oversight, and preserved records are better decisions.

Governance does not slow you down. It prevents you from having to back up.

"Our Team Is Smart Enough Without Frameworks"

Intelligence does not eliminate bias, blind spots, or groupthink.

Smart teams make bad decisions when:

- Everyone assumes someone else validated the assumptions

- The loudest voice in the room becomes the de facto decision owner

- Optimism bias dominates risk assessment

- No one wants to be the person who slows momentum

- Social pressure discourages challenges to the emerging consensus

The framework counters these failure modes by forcing explicit answers to explicit questions. Who owns this decision? What are we assuming to be true? What risks exist? Who provides oversight? How will we know if we were wrong?
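One way to make those explicit answers hard to skip is to capture them in a record that reports what is still blank. The sketch below is a hypothetical illustration, not a prescribed template: the class name `DecisionRecord`, its fields, and the pairing of each question with a pillar are assumptions made here.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class DecisionRecord:
    """One free-text answer per pillar; an empty string means unanswered."""
    authority: str = ""       # Who owns this decision?
    assumptions: str = ""     # What are we assuming to be true?
    analysis: str = ""        # What risks exist?
    accountability: str = ""  # Who provides oversight?
    auditability: str = ""    # How will we know if we were wrong?

    def unanswered(self) -> list[str]:
        """Return the pillars that have no answer recorded yet."""
        return [f.name for f in fields(self)
                if not getattr(self, f.name).strip()]


if __name__ == "__main__":
    record = DecisionRecord(
        authority="CFO",
        assumptions="Vendor pricing holds for 12 months",
    )
    print(record.unanswered())
```

A record with any unanswered pillar is a signal to pause before acting. The check confirms that an answer exists, not that the answer is sound.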

Smart teams using structured governance make better decisions than smart teams relying on intuition and informal process.

Talent without discipline produces expensive mistakes. Talent with governance produces defensible decisions.

"This Is Overkill for Our Decisions"

If a decision is not material enough to govern, it is not material enough to involve AI.

The framework is designed for decisions that carry financial, legal, operational, or reputational consequences. These are decisions that:

- Exceed a defined dollar threshold